Inception_v3

import torch

model = torch.hub.load('pytorch/vision:v0.10.0', 'inception_v3', pretrained=True)

model.eval()

所有预训练模型都要求输入图像以相同的方式进行归一化,即由形状为 (3 x H x W) 的 3 通道 RGB 图像组成的小批量,其中 H 和 W 预计至少为 299。图像必须加载到 [0, 1] 的范围,然后使用 mean = [0.485, 0.456, 0.406] 和 std = [0.229, 0.224, 0.225] 进行归一化。

这是一个示例执行。

# Download an example image from the pytorch website

import urllib

url, filename = ("https://github.com/pytorch/hub/raw/master/images/dog.jpg", "dog.jpg")

try: urllib.URLopener().retrieve(url, filename)

except: urllib.request.urlretrieve(url, filename)

# sample execution (requires torchvision)

from PIL import Image

from torchvision import transforms

input_image = Image.open(filename)

preprocess = transforms.Compose([

transforms.Resize(299),

transforms.CenterCrop(299),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),

])

input_tensor = preprocess(input_image)

input_batch = input_tensor.unsqueeze(0) # create a mini-batch as expected by the model

# move the input and model to GPU for speed if available

if torch.cuda.is_available():

input_batch = input_batch.to('cuda')

model.to('cuda')

with torch.no_grad():

output = model(input_batch)

# Tensor of shape 1000, with confidence scores over ImageNet's 1000 classes

print(output[0])

# The output has unnormalized scores. To get probabilities, you can run a softmax on it.

probabilities = torch.nn.functional.softmax(output[0], dim=0)

print(probabilities)

# Download ImageNet labels

!wget https://raw.githubusercontent.com/pytorch/hub/master/imagenet_classes.txt

# Read the categories

with open("imagenet_classes.txt", "r") as f:

categories = [s.strip() for s in f.readlines()]

# Show top categories per image

top5_prob, top5_catid = torch.topk(probabilities, 5)

for i in range(top5_prob.size(0)):

print(categories[top5_catid[i]], top5_prob[i].item())

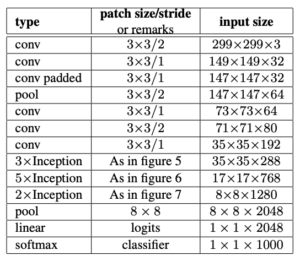

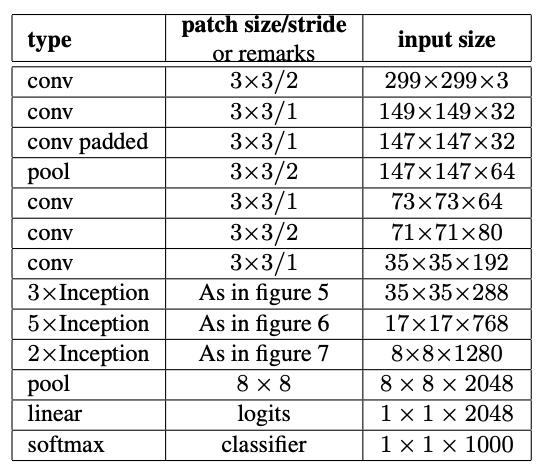

模型描述

Inception v3:基于对网络扩展方式的探索,旨在通过适当分解的卷积和积极的正则化尽可能高效地利用增加的计算。我们在 ILSVRC 2012 分类挑战验证集上对我们的方法进行了基准测试,结果表明,相对于最先进的技术取得了显著的进步:对于单帧评估,使用每次推理计算成本为 50 亿次乘加,参数少于 2500 万的网络,实现了 21.2% 的 top-1 错误和 5.6% 的 top-5 错误。通过 4 个模型的集成和多裁剪评估,我们在验证集上报告了 3.5% 的 top-5 错误(测试集上为 3.6% 的错误),在验证集上报告了 17.3% 的 top-1 错误。

下面列出了使用预训练模型在 ImageNet 数据集上的 1-裁剪错误率。

| 模型结构 | Top-1 错误率 | Top-5 错误率 |

|---|---|---|

| inception_v3 | 22.55 | 6.44 |

参考文献